If you work with Azure Blockchain, especially with the different Azure Ethereum Consortium Blockchain templates, together with Metamask, you can run into errors in several situations. Examples range from Metamask not being able to connect to the network or not receiving transactions, to being able to receive transactions but not send them, because they get stuck in a pending status or freeze after one or two confirmations. The simplest resolution is to install an older version of Metamask in the browser. The most typical cause is that the Azure Blockchain geth nodes still run an older release that is not compatible with the most recent Metamask version.

Practical notes on Enterprise software systems and the economics of software.

...by Daniel Szego

"On a long enough timeline we will all become Satoshi Nakamoto.."

Showing posts with label Azure. Show all posts

Tuesday, January 16, 2018

Friday, December 29, 2017

Azure Blockchain walkthrough - Setting up a Quorum Blockchain Demo

The Azure Blockchain platform has some pretty cool offerings for creating consortium blockchain solutions. In this walkthrough we demonstrate a step-by-step guideline for setting up a Quorum demo environment, including configuring the environment and deploying the first applications on top. Quorum is an extension / fork of Ethereum for consortium scenarios, with several useful extensions:

- increased performance with a Proof of Authority algorithm

- private and confidential transactions

- node privacy

1. Choosing the necessary Azure Blockchain template: Azure Blockchain has many different templates. Two of them are important for Quorum consortium blockchains: with the help of "Quorum Single Member Blockchain Network" you can create a minimal infrastructure, with "Quorum Demo" a preconfigured demo environment is delivered.

2. Delivering Infrastructure: In this tutorial we deliver the "Quorum Demo" template. It requires the configuration of a standard Azure image with the usual virtual machine sizing parameters. All blockchain specific installation step will be configured in the post configuration.

3. Post configuration of the infrastructure:

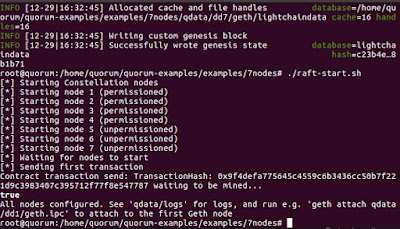

If you see the following screen, the Quorum demo started successfully.

Optionally, before starting the nodes it might be a good idea to pre-allocate some ether. The 7nodes demo uses the genesis.json genesis file. You can pre-allocate ether to the coinbase address with the following command:

4. To test if things working you can attach to the nodes with the help of the Geth console and make further configuration if necessary (make sure that you are in the 7nodes demo folder, if you get permission denied error message use sudo at the beginning, if the ipc file is not found than there was probably an error in the previous step at setting up the network):

geth attach ipc:qdata/dd1/geth.ipc

Optionally, after attachment it might be a good idea to distribute some of the preallocated ether, with the following command:

eth.sendTransaction({from:"0xed9d02e382b34818e88b88a309c7fe71e65f419d",to:"<to_address>", value: 100000000})

5. Testing a pre-deployed private contract: with the 7nodes demo scenario, there is a private contract, called simple storage that has already been deployed at the setting up of the network, you can test as starting two windows and attaching to the node 1 and node 4:

geth attach ipc:qdata/dd1/geth.ipc

geth attach ipc:qdata/dd4/geth.ipc

then configuring the contract

var address = "0x1932c48b2bf8102ba33b4a6b545c32236e342f34";

then calling get function should deliver 42 on node 1 and 0 on node 4:

private.get()

6. Configure Truffle: Truffle must be configured to the environment with a custom network configuration that can be set in truffle.js. It is important to set the public address to the public address of the virtual machine and configure the 22000 (Quorum rpc port to be open):

If you configured correctly, you should be able to start a migration with Truffle. There might be some issues however, as an example, if you Truffle deployment will remain hanging in some scenarios. The reason for that is that the development javascript expects that the transaction was actually mined. As however in Quorum there is no mining, the process might stay hanging. One workaround is to use only one javascript deployment script and based on the transaction hashes check explicitly if the given transaction was correctly validated. Another workaround that sometimes work is to start the Truffle console explicit on a given node and execute the migration from there. And last but not least, do not forget to open the 22000 - 22008 ports on the Azure environment.

7. Configuring metamask with Quorum: configuring metamask with quorum is pretty similar to configuring another given network via the Custom RPC of Metamask. Simply use the IP address and the port with http:

Figure 1. Quorum Azure templates

2. Delivering Infrastructure: In this tutorial we deliver the "Quorum Demo" template. It requires the configuration of a standard Azure image with the usual virtual machine sizing parameters. All blockchain specific installation step will be configured in the post configuration.

Figure 2. Setting up Quorum demo infrastructure

ssh <user>@<ip> -- logging in into the environment

git clone https://github.com/jpmorganchase/quorum-examples.git -- cloning the repo

cd quorum-examples/examples/7nodes -- the 7 nodes demo

sudo su -- changing to root

./raft-init.sh -- initialising the environment (you have to probably use sudo)

./raft-start.sh -- starting the environment (you have to probably use sudo)

If you see the following screen, the Quorum demo started successfully.

Figure 3. Quorum demo started successfully

Optionally, before starting the nodes it might be a good idea to pre-allocate some ether. The 7nodes demo uses the genesis.json genesis file. You can pre-allocate ether to the coinbase address with the following command:

"alloc":

{"0xed9d02e382b34818e88b88a309c7fe71e65f419d": {"balance":

"111111111"}}

geth attach ipc:qdata/dd1/geth.ipc

Optionally, after attachment it might be a good idea to distribute some of the preallocated ether, with the following command:

eth.sendTransaction({from:"0xed9d02e382b34818e88b88a309c7fe71e65f419d",to:"<to_address>", value: 100000000})

geth attach ipc:qdata/dd1/geth.ipc

geth attach ipc:qdata/dd4/geth.ipc

var address = "0x1932c48b2bf8102ba33b4a6b545c32236e342f34";

var abi = [{"constant":true,"inputs":[],"name":"storedData","outputs":[{"name":"","type":"uint256"}],"payable":false,"type":"function"},{"constant":false,"inputs":[{"name":"x","type":"uint256"}],"name":"set","outputs":[],"payable":false,"type":"function"},{"constant":true,"inputs":[],"name":"get","outputs":[{"name":"retVal","type":"uint256"}],"payable":false,"type":"function"},{"inputs":[{"name":"initVal","type":"uint256"}],"type":"constructor"}];

var private = eth.contract(abi).at(address)

private.get()

Figure 4. Live (Quorum Azure) network configuration

You can use the following command for migration:

truffle migrate --network live --verbose-rpc

If everything is configured correctly, you should be able to start a migration with Truffle. There might be some issues, however: in some scenarios the Truffle deployment hangs. The reason is that the deployment JavaScript expects the transaction to be mined. As there is no mining in Quorum, the process might wait indefinitely. One workaround is to use a single JavaScript deployment script and check explicitly, based on the transaction hashes, whether each transaction was validated. Another workaround that sometimes works is to start the Truffle console explicitly on a given node and execute the migration from there. Last but not least, do not forget to open ports 22000 - 22008 in the Azure environment.

7. Configuring Metamask with Quorum: configuring Metamask with Quorum is pretty similar to configuring any other network via the Custom RPC setting of Metamask. Simply use the IP address and the port with http:
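The first workaround, checking transaction hashes explicitly, could be sketched roughly as follows. The helper name `waitForReceipt` and its parameters are hypothetical; the receipt lookup is passed in as a function so that the sketch does not depend on a particular web3 version (with a callback-style web3 it could wrap `web3.eth.getTransactionReceipt`).

```javascript
// Poll for a transaction receipt until it appears or the attempts run out.
// getReceipt(txHash, callback) is injected by the caller; callback(err, receipt)
// is expected to deliver null while the transaction is not yet in a block.
function waitForReceipt(getReceipt, txHash, intervalMs, maxAttempts) {
  return new Promise(function (resolve, reject) {
    var attempts = 0;
    function poll() {
      attempts += 1;
      getReceipt(txHash, function (err, receipt) {
        if (err) { return reject(err); }
        if (receipt) { return resolve(receipt); } // transaction was included
        if (attempts >= maxAttempts) {
          return reject(new Error("no receipt for " + txHash));
        }
        setTimeout(poll, intervalMs);             // try again later
      });
    }
    poll();
  });
}
```

A deployment script would send the transaction, keep the returned hash, and continue only after `waitForReceipt` resolves, instead of relying on the migration framework to detect that the transaction was mined.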

Figure 5. Metamask configuration for Quorum

Azure Blockchain walkthrough - Setting up Ethereum Consortium Blockchain

The Azure Blockchain platform has some pretty cool offerings for creating consortium blockchain solutions. In this walkthrough we demonstrate a step-by-step guideline for creating and configuring a consortium network, and for developing and deploying the first applications on top.

1. Choosing the necessary Azure Blockchain template: Azure Blockchain offers many different templates. Two kinds are relevant for Ethereum consortium blockchains: with "Ethereum Consortium Blockchain" you can create a consortium infrastructure of up to 12 nodes in one configuration step. With "Consortium Leader" or "Consortium Member" it is possible to do the same configuration step by step, in other words dynamically whenever a new member joins.

2. Configuring the template: After the necessary template has been chosen, it has to be configured. Apart from the usual Azure parameters like resource group and administrator accounts, the structure of the network has to be set, such as the number and size of the mining and transaction nodes or the number of consortium members. The last tab makes it possible to configure the Ethereum-specific settings, like the network ID or a custom genesis block.

Figure 2. Configuring the Ethereum Consortium Blockchain

3. Creation finished: Once the network creation has finished, the most important parameters can be found on the result page:

- Admin-site: a general page about the status of the network, including a faucet to distribute some pre-allocated ether.

- RPC-Endpoint: an important parameter for communicating with the consortium blockchain, for example from Truffle or Metamask.

- SSH Info: needed for logging in to the environment and configuring parameters, most typically for unlocking the coinbase account.

Figure 3. important parameters for accessing the consortium blockchain

4. Unlocking the coinbase account: A locked coinbase account might cause difficulties later, so it is not a bad idea to unlock it explicitly. Using the ssh command from the previous output window, you can attach to the first node of the Ethereum consortium network (note that the first node is usually the transaction node; if your setup is configured differently, you can log in to further nodes with the -p 3001, -p 3002, ... parameters).

Once you are logged in, you can use:

geth attach -- attach to a running geth instance

personal.unlockAccount(eth.coinbase) -- unlock the coinbase account; the default unlock time is 5 minutes

eth.coinbase -- get the address of the coinbase account

personal.unlockAccount('address', 'passphrase', duration) -- unlock the account for a longer period, with the duration given in seconds. If you use 0 as the duration, the account stays unlocked until the geth process exits.

Figure 4. Metamask configuration

6. Deploying a contract: Once Metamask is set up, you can deploy contracts directly or indirectly with the help of Remix by selecting "Injected Web3" or "Web3 Provider" as the environment. "Injected Web3" deploys the contract with the help of the configured Metamask account.

Figure 5. Remix configuration

7. Configuring Truffle for the consortium network: if you want to deploy to the new Azure consortium network, make sure that the new network is configured in the truffle.js configuration file. You can take the host name from the Ethereum-RPC-Endpoint in the output window and the network_id from the initial configuration. You can then deploy to your consortium network, for instance with the truffle migrate --network azureNetwork command.

Figure 6. Truffle configuration

Monday, July 3, 2017

Governance issues of self service IT

PowerApps, Power BI and Flow are awesome self-service IT tools from Microsoft: reports, mobile apps, small workflows or business logic can be created without real coding knowledge, just by power users clicking the applications together. However, this direction of self-service application development raises serious questions regarding governance. The situation might be similar to what happened with Excel and in the early stages of SharePoint, where a huge number of unregulated island solutions were born without the possibility to integrate them or scale them up.

Such self-service solutions clearly have the advantage that a simple power user without development or coding experience is able to deliver a solution. Another advantage is that these solutions can usually be developed at rocket speed, both for the first delivery of the application and for later modifications. What is missing, however, is a general governance concept:

- Well-defined rules of thumb for authorization: roles, people, groups, and the possibility of general authorization guidelines.

- Rights and visibility in the information flow of data: for example, in a simple report containing the average salary of several employees, it makes sense to define access rights on the data side that determine who is allowed to see the individual salaries and who is allowed to see the total sum.

- Scaling the application: most rapid application development environments have architectural limits, which show up as a point in application delivery where further use cases cannot be delivered with the same methodology. The question is whether, once that point is reached, there is an integrated way to implement the further use cases, for example by extending or replacing the self-service development style with classic programming.

- Migrating between applications: the primary idea of self-service IT is to let power users "click together" applications. Migrating an existing legacy application more or less automatically to the new platform is usually not supported.

As a consequence, self-service frameworks provide a solution for certain business requirements, but they raise several new governance challenges as well.

Tuesday, May 16, 2017

Comparing enterprise Blockchain frameworks: Hyperledger vs Azure Blockchain as a Service

The Blockchain revolution has moved from version 1.0 to 3.0 at rocket speed. While Blockchain 1.0 systems concentrated mostly on Bitcoin and its variants, like Litecoin or Dogecoin, Blockchain 2.0 systems tried to extend the original concept to a general programming paradigm. The most prominent examples are Ethereum, Counterparty or RSK.

Blockchain 3.0 systems try to extend or further develop the different smart contract systems so that they are applicable to typical enterprise scenarios as consortium Blockchain solutions. Two major examples are Azure Blockchain as a Service and Hyperledger. Both frameworks start from the same basic problem statement: in a real enterprise scenario a purely Smart Contract based system is simply not efficient enough. It does not scale enough for the different enterprise use cases, and putting everything from data to business logic into a smart contract is not necessarily suitable. Despite the same problem statement, they use two fundamentally different approaches.

In Azure Blockchain as a Service (Figure 1), a third-party Smart Contract system is integrated: typically Ethereum or a variant of Ethereum, but some other solutions, like Chain or Emercoin, are also available out of the box at the moment. To extend the business functionality, a highly secure off-chain system is proposed, the so-called Cryptlets: cryptographically secured small programs that run in dedicated hardware containers called Enclaves. Cryptlets are planned to realize secure business logic and communication with the Blockchain in two directions: on the one hand, external input data can be securely integrated via Oracles by Cryptlets; on the other hand, business logic that requires higher performance and does not necessarily have to run on the Blockchain can be implemented efficiently. On top of that, Azure Blockchain as a Service provides some additional elements, like Key Vault for securely storing keys, or Azure Active Directory integration for identity management.

Figure 1. Azure Blockchain as a Service Architecture

Hyperledger, on the other hand, redefines the whole Blockchain concept with different building blocks (Figure 2). The consensus mechanism and transaction validation are split into different parts, like Consensus Manager, Distributed Ledger or Ledger Storage, which makes it possible to implement different kinds of Blockchain or Blockchain-style protocols. They usually provide a state-based representation that is pretty far from the original UTXO-based concept, so it is probably better to speak of a Blockchain-style protocol. Smart Contracts and business logic can be implemented by the so-called Chaincode services. These are practically secure nodes or virtual machines, each containing a secure container and executing a certain program at each chaincode node. They can be implemented in different languages (at the moment in Go, but other programming languages will be available as well). The framework is extended with additional services as well, like identity management.

Figure 2. Hyperledger Architecture.

The following table tries to summarize the major ideas of the two architectures:

From a conceptual point of view the two frameworks represent two different directions. Hyperledger moves in the direction of defining a general framework and building blocks for implementing different kinds of consortium Blockchain protocols, while Azure Blockchain as a Service integrates existing Blockchain solutions and extends them with a crypto framework to realize any kind of highly secure on-chain - off-chain protocol. In this sense they should not necessarily be regarded as competing technologies; for example, Cryptlet technology could realistically be used to extend a Hyperledger-based Blockchain system.

Thursday, February 23, 2017

Cloud technology and the network effect

I must admit, cloud technology has clearly become a winner because of the network effect: surprisingly, not just because of economies of scale, economies of scope and cost factors, but because of the network effect between the different technologies. As an example, there is an efficient machine learning algorithm in the Azure cloud; the technology is used to analyze security breaches of the basic infrastructure, improving security by working with data sets from all around the world. The improved, machine learning assisted security is in turn used to improve the whole infrastructure of every service, including the infrastructure of the machine learning part itself. That is a real network effect of technology integration.

Sunday, February 12, 2017

Deploying Ethereum Studio with Azure Blockchain as a Service, a step by step Approach

Let us create an environment for Ethereum Studio directly via the Azure portal.

01. The first step is to find the necessary template among the Azure templates. Just search for Blockchain after clicking "New".

02. As usual, the first thing is to configure the basic elements of the virtual machine itself; in this case only one virtual machine is created as a development sandbox. Parameters such as resource group, name of the image, user access and location are defined here.

03. The next step is to select the size of the virtual machine; at the moment only one size is available.

04. The next step is to configure the additional features of the virtual machine itself, like network settings, storage account, monitoring or diagnostics.

05. If everything worked fine, we can confirm the brief summary window and purchase the machine. The virtual machine is created with the preconfigured Ethereum Studio Sandbox. Unfortunately, the Ethereum Studio license is not part of the Azure subscription, so one will be billed for that separately.

It is important to note that at the moment it is possible to get a free Integrated Development Environment via ether.camp.

Deploying a Consortium Blockchain with Azure Blockchain as a Service, a step by step approach

Let us create an Ethereum consortium Blockchain solution on Azure. In practice this means either clicking the solution together on the Azure portal or starting the creation from PowerShell. Let us see how it looks on the Azure portal.

01. The first thing to do is to search for either the Ethereum or the Blockchain templates themselves. There are 10 of them; we want to use the first one, "Ethereum Consortium Blockchain".

02. A brief summary of the Ethereum Consortium Blockchain solution can be found under the following link. The configuration itself is carried out in three major steps. As a first step we have to define basic parameters like resource group, authentication, location and subscription.

03. The next part is the structure of the network. Here we can configure parameters like the number of consortium members, the number of mining nodes per consortium member, and the number of transaction nodes, which are in practice the gateways through which users submit transactions. For all nodes it is possible to choose the size of the virtual machines and the redundancy of the storage.

04. Last but not least, the Ethereum network ID has to be set. It defines the common ID of the network; in practice only nodes with the same ID can communicate with each other. In addition, the password of the default Ethereum account and the passphrase for the private key generation must be defined.

05. If everything is set correctly, the general terms must be accepted and the deployment of the consortium Blockchain starts.

06. If everything worked without errors, the consortium Blockchain has been created.

07. Under deployment / Microsoft.Azure.Blockchain ... you find the links for both the Admin site and the RPC endpoints.

Tuesday, August 16, 2016

Artificial Intelligence as a Service - Cognitive Science as a Service

Recent trends in cloud computing point in the direction of integrating several artificial intelligence and machine learning tools into a cloud platform. On the Microsoft side, tools like Cognitive Services, Azure Machine Learning or Cortana Analytics provide machine learning and artificial intelligence in the cloud. Similar tools can be found at AWS, like Amazon Machine Learning. In this sense, it makes sense to identify the whole area as Artificial Intelligence as a Service, or rather Cognitive Science as a Service, or just simply Machine Learning as a Service.

On the other hand, there are also applications that use intelligent cloud services to achieve certain domain-specific tasks, like intelligent threat analytics from Microsoft.

Tuesday, July 5, 2016

Cloud Storage versus Decentralized P2P Storage

With the current trends in decentralized software development, new applications and platforms appear every day. One exciting direction is to build decentralized or P2P storage systems on top of existing Blockchain technologies, like SWARM on top of Ethereum. These technologies are certainly still very much in the experimental phase; nevertheless, it is interesting to evaluate which advantages or disadvantages a P2P storage system can have compared with, for example, classical cloud storage.

- Zero downtime: cloud systems surely have the high availability characteristic. The same property can, however, be found in P2P storage as well. Copies of a document or data set are generally stored on many nodes: if an adequate distribution algorithm is implemented that places copies based on the availability and geographical location of the nodes, then high availability can be guaranteed.

- Fault tolerance: The same is true for fault tolerance. An adequate distribution of the copies of different pieces of information across different nodes can realize a highly fault-tolerant system, similar to cloud storage.

- Scale up - Scale down: from the point of view of scaling up or down, the two systems have more or less the same characteristics. One can always get some more storage with a couple of clicks, and one can release storage just as easily.

- DDoS resistance: The cloud is more or less centralized, or at least based on several huge centralized data centers; as a consequence it is not as immune to a DDoS attack as a fully decentralized P2P network.

- Censorship resistance: Possible censorship is a major characteristic of any cloud storage system. As the cloud service itself is operated by a large company like Microsoft or Amazon, censorship is always easily possible. In a P2P system, on the contrary, successful censorship requires at least 51% or perhaps even 100% of the resources, which is economically pretty expensive.

- Security: Security is a big issue for a P2P storage system. As our data is stored all over the world, the only way to provide a professional service is to secure the data itself so strongly that even the host of a node cannot decrypt it. As it is theoretically possible to realize such a strong encryption mechanism, the question is whether accessing the data remains performant enough.

- Price: The second big question is the price of such a system, which is influenced by two factors. On the one hand, P2P storage can be more expensive than cloud storage, as most of the data is stored in several copies. On the other hand, P2P storage can also be cheaper, as most of the resources are extremely cheap: the data is stored on resources that would otherwise not be utilized.
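To make the availability and price points above a bit more concrete, here is a small back-of-the-envelope sketch. It assumes, purely for illustration, that nodes fail independently and that each node is reachable with the same probability; real P2P networks will not satisfy these assumptions exactly, and the function name is hypothetical.

```javascript
// Probability that at least one of `copies` replicas is reachable,
// assuming every node is independently available with probability `nodeAvailability`.
function replicatedAvailability(nodeAvailability, copies) {
  return 1 - Math.pow(1 - nodeAvailability, copies);
}

// Three copies on nodes that are each online 90% of the time
console.log(replicatedAvailability(0.9, 3).toFixed(3)); // prints 0.999
```

Under these assumptions, a few copies on fairly unreliable nodes already give high availability, at the price of storing the data several times.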

In conclusion, I would say the major risk is the security - performance characteristic. If these two properties can be realized in a way that is comparable with cloud storage, or at least acceptable to the market, then there is a chance that decentralized P2P storage services will appear in a year or two.

Tuesday, May 17, 2016

Software as a Service in the cloud à la Azure

The Azure software as a service portfolio seems to be booming. One finds new services and solutions every day. Examples are PowerApps for mobile application development, Flow as a rule engine, Microsoft Forms, Power BI for Big Data, GigJam for data visualization, Sway for visualization as well, and who knows what comes next week.

To analyse this chaos, we try to categorize these services along two dimensions:

1. Targeted customer segment: it is sometimes pretty difficult to identify at first glance which customer segment is targeted by an Azure tool. Is it a tool planned for end customers, is it rather a tool for small businesses, or is the customer segment really enterprise? As an example, Sway seems to target a real end-customer segment: create your content, share it with your friends, be happy. On the other hand, tools like PowerApps or Power BI are aimed rather at the business segment.

2. Possibility for customization or integration: The second dimension to consider is the possibility of customization or integration. Some tools like Sway are rather out-of-the-box products, without the possibility to customize many things or to integrate with other SaaS solutions. Other services like Azure Machine Learning provide plenty of possibilities to customize, with or without scripts, or to integrate via REST API with other Azure services. In this sense they share many characteristics with Platform as a Service solutions. Perhaps Framework as a Service would be an adequate name.

Certainly, the whole area is changing pretty fast, so not only the current positioning of a service is important, but its future direction as well. As an example, the mobile app development framework Project Siena was focused on the end-customer segment; it has been further developed for the enterprise segment and renamed PowerApps.

We try to summarize some of the SaaS products in the following picture. Naturally, the exact characterization of a given Azure service is based only on our fast, subjective analysis.

Figure 1. Azure SaaS Portfolio Analysis.
