Programming languages and software platforms are an interface technology between the Turing machines of the computer and the neocortex of the human brain. This means they should be designed as much for humans as for computers. For example, a good software framework should appear to humans as a conveyor chain: an explicit number of steps that people can parametrize in a logical order, with only an explicit number of possible choices at each step. This is not because it is how the computer handles things best, but because it is how humans handle things best. The design can be fine-tuned further by considering other limitations of human thinking, such as the 7±2 limit of short-term memory: an optimal conveyor chain might contain 7±2 steps, and each step might have 7±2 input elements as well. Since the neocortex is largely hierarchical, the chain can also be built hierarchically, with each step containing a sub-conveyor chain of up to 7±2 steps. The whole structure might reflect not only the neocortex but human collaboration and social interaction as well. For example, different teams with different areas of competence might work on different steps of the software, and the software framework itself might directly support their collaboration.

Practical notes on Enterprise software systems and the economics of software.

...by Daniel Szego

"On a long enough timeline we will all become Satoshi Nakamoto.."

Daniel Szego

Showing posts with label software development. Show all posts

Saturday, December 22, 2018

Monday, November 12, 2018

Fabric composer tips and tricks - not updating without error message

When working with Fabric Composer, you may find that an item is not updated even though there is no error message and the transaction executes without any problem. This can be caused by mixing up the getAssetRegistry and getParticipantRegistry calls. If you see this behaviour, check that you update assets through getAssetRegistry and participants through getParticipantRegistry.

Tuesday, November 6, 2018

Solidity as a consortium blockchain programming language

There are many initiatives to use Solidity as a blockchain programming language, not just in Ethereum but in many other, mostly consortium, blockchain solutions. On the one hand, this is a logical direction, as most developers with blockchain programming experience gained it with Solidity on Ethereum. On the other hand, most existing blockchain applications are written in Solidity, so they might be migrated this way to a consortium platform without any modification.

Even so, the direction is perhaps not optimal. On the one hand, Solidity was one of the first purely blockchain-oriented programming languages; it is certainly good as a first initiative, but perhaps not optimal in the long run. It has several teething problems that should be repaired over time, like the chaotic type system or the payment functions. On the other hand, programming on Ethereum with Solidity indirectly assumes very strong constraints in terms of computation and gas. If the same use case is put on top of a consortium blockchain, where gas is practically free of charge, the application should probably be implemented in a totally different way, even if the programming language is the same.

Wednesday, October 10, 2018

Notes on the difficulty of smart contract programming languages

The idea that blockchain programming can be done by anyone is pretty dangerous. Programming for an immutable ledger, for applications that probably store currency and carry a lot of business value, is difficult. It requires a lot of practical and theoretical background, programming skill and quality assurance. Dynamically typed languages like JavaScript let everyone implement small programs quickly; however, the language soon becomes a problem when one wants to implement large-scale or mission-critical programs. For such highly secure, mission-critical applications, strictly typed languages with formal verification and strong testing methodologies must be used.

Monday, August 27, 2018

Blockchain strategy and bootstrapping the ecosystem for developers

With the appearance of newer and newer blockchain platforms, every company tries to position and bootstrap its platform differently with regard to the developer community, and to attract more and more developers to work with the platform. Strategies vary:

- creating a brand new platform with a brand new language: the best example is Solidity; as it was one of the first languages for blockchain development, it made sense to invent a brand new language. Similar attempts were made with Vyper and with the modelling language of Hyperledger Fabric Composer.

- supporting a well-known language: many platforms try to use a well-known language that was already widely used by programmers, like Java or JavaScript, and attract as many developers from that language as possible. Examples of this strategy are the choice of Java for Hashgraph, or of Java and JavaScript for Hyperledger Fabric.

- last but not least, there are platforms that support multiple programming languages, like Tendermint or Babel, indirectly trying to attract every developer in the world.

The strategy can, however, be extended. The aim of such a platform should not be to attract as many developers as possible, but as many application builders or applications as possible. In this sense, one strategic direction is to attract business users instead of developers and provide no-cost or low-cost application development environments. Another idea might be to provide an interface for integrating different existing business applications, or to directly use domain-specific languages for business modelling that are integrated with the blockchain platform, like the different BPMN notations.

Friday, March 3, 2017

Notes on hybrid machine intelligence systems and system design

What is sometimes missing from machine intelligence systems is the view that machine intelligence is an integrated part of the system design. Think of a complex IT system. It could be designed systematically so that certain parts are self-adaptable entities, meaning that they can be trained systematically, while other parts are fixed. Which parts of the information system can be trained, and for which parts training makes sense, is a general system design question as well.

As a basic system model, let us imagine an object-oriented system with several objects or entities that contain properties, functions or constraints. In the simplest case, a self-adaptable entity is a set of general properties {P1, P2, ..., PO} and a set of functions {F1, F2, ..., FO}, in which each function maps some input parameters to some output ones based on the general properties. These functions are not implemented in a fixed way; they are trained with measured data. The system could be designed to learn continuously, but it is probably better system design to separate different stages, like:

- performing phase: in which the system is working with static functions.

- learning phase: in which the system is adapting based on some measured or generated data.

- new performing phase: the result of the previous learning phase is used as static function, implying practically a new release of the system.

The functions themselves might follow different machine learning patterns, ranging from classical stochastic functions via artificial neural networks to more symbolic decision tree learning algorithms.

The model can be improved further to consider not just self-adaptable entities but also self-adaptable connections between different entities, or even inheritance between entities.
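The separation of phases described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name `SelfAdaptableEntity`, its methods and the `release` counter are hypothetical, and a one-dimensional least-squares line stands in for whatever learning method (stochastic function, neural network, decision tree) a real system would use.

```python
from typing import List, Tuple


class SelfAdaptableEntity:
    """Entity whose function is static while performing and refit only while learning."""

    def __init__(self, slope: float = 1.0, intercept: float = 0.0) -> None:
        # Parameters of the currently released static function.
        self.slope = slope
        self.intercept = intercept
        self.release = 1
        self._measurements: List[Tuple[float, float]] = []

    def perform(self, x: float) -> float:
        """Performing phase: evaluate the static function."""
        return self.slope * x + self.intercept

    def record(self, x: float, y: float) -> None:
        """Collect measured data without changing the released function."""
        self._measurements.append((x, y))

    def learn(self) -> None:
        """Learning phase: refit by least squares and publish a new release."""
        n = len(self._measurements)
        if n < 2:
            return  # not enough data to fit a line
        mean_x = sum(x for x, _ in self._measurements) / n
        mean_y = sum(y for _, y in self._measurements) / n
        var_x = sum((x - mean_x) ** 2 for x, _ in self._measurements)
        if var_x == 0:
            return  # all inputs identical, slope is undefined
        cov = sum((x - mean_x) * (y - mean_y) for x, y in self._measurements)
        self.slope = cov / var_x
        self.intercept = mean_y - self.slope * mean_x
        self.release += 1
        self._measurements.clear()
```

Calling `record` never changes what `perform` returns; only an explicit `learn` call does, which corresponds to the "new release" in the third phase.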

Sunday, February 12, 2017

Creating simple name service on the Ethereum Blockchain

Let us implement a simple name service on the Ethereum Blockchain. The service consists of one smart contract, called NameService, which provides functions to register a name for an address, get the address for a registered name, and rewrite the address of an already registered name. Access control is pretty simple: everyone can create a new DNS entry, but an existing entry can only be overwritten by the owner of the address.
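The access rule can be modelled in plain Python to make it concrete. This is a sketch of the logic only, not the Solidity contract from the post: the method names `register` and `resolve` are hypothetical, the `sender` argument stands in for the calling account, and the owner of an entry is assumed to be the account that first registered the name.

```python
from typing import Dict, Optional, Tuple


class NameService:
    """In-memory model of a name registry with owner-only overwrite."""

    def __init__(self) -> None:
        # name -> (owner account, registered address)
        self._entries: Dict[str, Tuple[str, str]] = {}

    def register(self, sender: str, name: str, address: str) -> None:
        """Create a new entry, or overwrite an existing one if sender owns it."""
        entry = self._entries.get(name)
        if entry is not None and entry[0] != sender:
            raise PermissionError("only the owner may overwrite this name")
        self._entries[name] = (sender, address)

    def resolve(self, name: str) -> Optional[str]:
        """Return the address registered for name, or None if unknown."""
        entry = self._entries.get(name)
        return entry[1] if entry is not None else None
```

A single `register` function covers both creating and rewriting an entry; the contract described in the post may well split these into separate functions.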

For a first run, the service can be tested simply in the Ethereum Studio sandbox, for example by registering a name and checking that it can be read back.

Let us test the case from the JavaScript side as well, extending app.js in the project with two simple JavaScript functions to read from and write to the name service, and some HTML code that integrates them into the user interface.

Testing from the JavaScript side, we get the same result as in Ethereum Studio.

Saturday, January 14, 2017

Creating a simple job shop smart contract on the Ethereum Blockchain

Let us create a simple job shop smart contract. As a first step, we create a smart contract called Job with some variables: a description, the account responsible for the job, an internal jobID and the state of the job.

Next, we create a JobShop smart contract that uses a mapping to store the different jobs, keyed by a fictive primary key called jobID, and has two functions for creating jobs and assigning them to an account.

Once the job task or job address is known, the job status can be set to done. In real-life scenarios this simplified functionality can certainly be extended, for instance with more variables, events raised when a job is finished, or delegation and reporting functionality.
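The structure of the two contracts can be approximated in Python as follows. It is a rough model under assumptions: the state names in `JobState`, the method names and the auto-incrementing ID are hypothetical and only mirror the description above, not the original Solidity code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class JobState(Enum):
    CREATED = 1
    ASSIGNED = 2
    DONE = 3


@dataclass
class Job:
    job_id: int
    description: str
    responsible: Optional[str] = None
    state: JobState = JobState.CREATED


class JobShop:
    """Stores jobs in a mapping keyed by a fictive primary key (job_id)."""

    def __init__(self) -> None:
        self.jobs: Dict[int, Job] = {}
        self._next_id = 1

    def create_job(self, description: str) -> int:
        job_id = self._next_id
        self._next_id += 1
        self.jobs[job_id] = Job(job_id, description)
        return job_id

    def assign_job(self, job_id: int, account: str) -> None:
        job = self.jobs[job_id]
        job.responsible = account
        job.state = JobState.ASSIGNED

    def mark_done(self, job_id: int) -> None:
        self.jobs[job_id].state = JobState.DONE
```

The sketch performs no permission checks; on a real chain one would probably restrict `mark_done` to the responsible account.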

Friday, January 13, 2017

Analysing optimal technology choice for a project based on agile technology curve

Considering a usual enterprise software architecture, we can usually distinguish three phases. There is usually a setup phase of the technology that is pretty slow and expensive even though it does not cover many use cases (Q1). Most software development frameworks have an agile part in the middle, where use cases can be covered quickly and at low cost. At the end, there is usually an architecture limit of the technology, where the basic framework itself has to be developed further (Q2). Implementing use cases beyond the technology limit usually requires a relatively big effort, implying slow and costly delivery (Figure 1).

Figure 1. Agile technology curve and the performance of a project.

Let us look at the curve from another perspective: consider a project P with a set of use cases, and ask whether a given technology T is an optimal choice for the project. We can say:

- A technology is underperforming for a given project or set of use cases if only a small part of the agile phase is used. In this case the technology is simply not used enough, considering the pretty expensive setup phase, and another technology is perhaps a better choice.

- A technology is optimally performing for a given project or set of use cases if a large part of the agile phase is used to cover the use cases, meaning simply that the setup cost at the beginning pays off. This is the optimal technology choice.

- A technology is overperforming for a given project or set of use cases if many use cases are implemented beyond the technology limit, meaning that the project will probably be unnecessarily expensive and slowly delivered because of those use cases. In this case another technology is perhaps a better choice.

- A technology is not performing for a given project or set of use cases if nothing from the agile middle phase of the technology is used, meaning that after setting up the software framework only use cases beyond the technology limit are implemented. In this case another technology is surely a better choice.
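The four cases can be turned into a small decision function. The sketch below is illustrative only: the function name, its inputs and especially the two 0.3 thresholds for "small part" and "many use cases" are assumptions made for the example; the post does not quantify them.

```python
def classify_fit(
    agile_used: int,
    agile_capacity: int,
    beyond_limit: int,
    small_share: float = 0.3,
    many_share: float = 0.3,
) -> str:
    """Classify a technology for a project.

    agile_used:     use cases of the project covered inside the agile phase
    agile_capacity: use cases the agile phase could cover in total (> 0)
    beyond_limit:   use cases of the project beyond the technology limit
    """
    total = agile_used + beyond_limit
    if total == 0 or agile_capacity <= 0:
        raise ValueError("need at least one use case and a positive capacity")
    if agile_used == 0:
        return "not performing"
    if beyond_limit / total > many_share:
        return "overperforming"
    if agile_used / agile_capacity < small_share:
        return "underperforming"
    return "optimal"
```

For example, `classify_fit(16, 20, 1)` lands in the optimal case, while `classify_fit(3, 20, 0)` uses too little of the agile phase to justify the setup cost.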

Thursday, January 12, 2017

Creating a simple order process on the Ethereum Blockchain

Let us create a simple order workflow on the Ethereum Blockchain. The workflow imitates an order request containing information on which material was ordered, how many items, and the order price. We define two smart contracts. First, similarly to our previous example, we define an ApprovementActivity. The activity realizes the usual Approve, Reject and Delegate functionality as in our previous example; however, it is extended with a callback function that informs the original workflow as soon as the internal state has changed. Additionally, an event is raised to the client side as soon as a new approval is set.

Code of ApprovementActivity is the following:

The workflow itself is realized by another smart contract, called OrderWF. The workflow is pretty simple: it is initiated with the necessary input parameters, sets the initial state and creates an activity to be approved. Communication about state changes is realized by the callback function. As a second possible communication channel, a reference to the original workflow is handed over to the activity; however, this functionality is currently not used.

Certainly the process is just an oversimplified prototype. For a real-scale solution additional functionality should be built, like reading the order details from the ApprovementActivity smart contract, working with groups rather than individual addresses, fine-tuning permissions and so on.
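The callback mechanism between the two contracts can be illustrated with a Python model. It is a sketch under assumptions, not the original contracts: the class names, the state strings and the `events` list (which stands in for events raised to the client) are hypothetical, and Delegate is left out for brevity.

```python
from typing import Callable, List, Tuple


class ApprovalActivity:
    """Approval step that notifies its workflow through a callback."""

    def __init__(self, responsible: str, on_state_changed: Callable[[str], None]) -> None:
        self.responsible = responsible
        self.state = "Waiting"
        self.events: List[Tuple[str, str]] = []  # stand-in for client-side events
        self._on_state_changed = on_state_changed

    def _set_state(self, sender: str, new_state: str) -> None:
        if sender != self.responsible:
            raise PermissionError("only the responsible account may decide")
        self.state = new_state
        self.events.append(("ApprovalSet", new_state))
        self._on_state_changed(new_state)  # inform the owning workflow

    def approve(self, sender: str) -> None:
        self._set_state(sender, "Approved")

    def reject(self, sender: str) -> None:
        self._set_state(sender, "Rejected")


class OrderWorkflow:
    """Order request that creates one approval activity and follows its state."""

    def __init__(self, material: str, quantity: int, price: int, approver: str) -> None:
        self.material = material
        self.quantity = quantity
        self.price = price
        self.state = "WaitingForApproval"
        self.activity = ApprovalActivity(approver, self._activity_changed)

    def _activity_changed(self, activity_state: str) -> None:
        self.state = activity_state
```

Passing the bound method `_activity_changed` plays the role of the callback function; the workflow never polls the activity.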

Wednesday, January 11, 2017

Communicating with external datasource on the Ethereum Blockchain

Calling an external API from a Solidity smart contract is basically not possible. However, there is an indirect way to communicate with external data sources via internal variables, functions and events. Let us assume that we want to call an external API with three string parameters, parameter_1, parameter_2 and parameter_3, and expect a ReturnValue string back. The only way to do it is to raise an event, implement an event handler on the client side that makes the necessary call for the event, and write the resulting value back to the smart contract.

A simple smart contract for the implementation can be seen in the following:

Certainly the template handles only one call efficiently. If several calls are executed in parallel, then further considerations are required.
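The round trip of event, client-side handler and write-back can be modelled in Python. This is a sketch of the pattern, not the missing Solidity template: all names are hypothetical, the `events` list stands in for the contract's event log, and tagging each request with an ID is one assumed way of keeping parallel calls apart.

```python
from typing import Callable, Dict, List, Tuple

Request = Tuple[int, str, str, str]


class OracleContract:
    """Model of a contract that asks for external data by raising events."""

    def __init__(self) -> None:
        self.events: List[Request] = []     # stand-in for raised events
        self.results: Dict[int, str] = {}   # request id -> ReturnValue
        self._next_request_id = 1

    def request(self, parameter_1: str, parameter_2: str, parameter_3: str) -> int:
        """Raise an event describing the external call and return its id."""
        request_id = self._next_request_id
        self._next_request_id += 1
        self.events.append((request_id, parameter_1, parameter_2, parameter_3))
        return request_id

    def write_back(self, request_id: int, return_value: str) -> None:
        """Called by the client to store the result of the external call."""
        self.results[request_id] = return_value


def client_event_handler(
    contract: OracleContract, external_api: Callable[[str, str, str], str]
) -> None:
    """Off-chain side: serve every pending event and write the result back."""
    while contract.events:
        request_id, p1, p2, p3 = contract.events.pop(0)
        contract.write_back(request_id, external_api(p1, p2, p3))
```

Nothing in the model checks who calls `write_back`; on a real chain that function would need access control, since whoever writes the value back is trusted outside the consensus.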

Several security considerations have to be made, however. One of the main ideas behind Solidity is that everything is controlled by a decentralized consensus. External data used by a Blockchain application is not part of the decentralized consensus, which opens a way to cheat or hack the whole application. As a consequence, extensive use of external data is not recommended for a Blockchain application.

Creating a simple task for an approvement workflow on the Ethereum Blockchain

Let us assume that we want to implement a simple approvement workflow, containing one user task with a responsible person who has to approve, and three possible outcomes of the task: approved, declined or delegated. For the sake of completeness, we assume that functionality for logging and access control is already available, as described in the previous blog. In our example the approvement process has one special information field, ApprovementInformation, which is the information to be approved. In real-case scenarios this information is best realized by an explicit smart contract.

The implementation is pretty straightforward. The state is stored in the workflowState variable and modified by the Approve and Reject functions. The person responsible for the workflow is stored in the WorkflowResponsible variable; in the current implementation only the responsible person can accept or decline the task, but everyone with write access is allowed to delegate it to someone else.
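The rules for who may approve, reject or delegate can be written down as a short Python model. It is a sketch under assumptions: the attribute names follow the variables mentioned above in snake_case, the state strings are hypothetical, and write access is represented by a plain set of accounts rather than the access modifiers of the earlier posts.

```python
from typing import Iterable, Set


class ApprovalTask:
    """One user task with a responsible account and three possible outcomes."""

    def __init__(self, responsible: str, information: str, writers: Iterable[str]) -> None:
        self.workflow_responsible = responsible
        self.approvement_information = information
        self.workflow_state = "Waiting"
        # Accounts with write access; the responsible account is included.
        self.writers: Set[str] = set(writers) | {responsible}

    def _require_responsible(self, sender: str) -> None:
        if sender != self.workflow_responsible:
            raise PermissionError("only the responsible account may decide")

    def approve(self, sender: str) -> None:
        self._require_responsible(sender)
        self.workflow_state = "Approved"

    def reject(self, sender: str) -> None:
        self._require_responsible(sender)
        self.workflow_state = "Declined"

    def delegate(self, sender: str, new_responsible: str) -> None:
        if sender not in self.writers:
            raise PermissionError("write access is required to delegate")
        self.workflow_responsible = new_responsible
        self.workflow_state = "Delegated"
```

After a delegation the new responsible account can still approve or reject, because those checks look only at the current value of `workflow_responsible`.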

Tuesday, January 10, 2017

Combining data governance and permissions on the Ethereum Blockchain - a basic approach

As we have seen in the previous couple of blogs, it is possible both to create permissions on the Ethereum Blockchain and to implement basic data governance protocols. Now we try to combine the two approaches in one inheritance hierarchy.

The simplest way is to define an inheritance hierarchy starting with the basic logging - governance functionalities.

As a second smart contract, let us define a descendant of the Governance smart contract that implements only the access modifiers.

As the next descendant we define the administrative functions for giving access to a user. It is important to note that this functionality must already be guarded by the modifiers, making sure that only accounts with the necessary right can set further rights. Regarding governance, it is possible to monitor both access attempts and successful accesses. This is realized by the AdminAccessRole input parameter in our example.

Last but not least, custom functions can be guarded by the necessary modifiers, both ensuring that only an account with the necessary right can access the functionality and making sure that both access attempts and successful accesses are logged.
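The layering of the hierarchy can be mirrored with Python class inheritance. This is a sketch of the structure only: the class names other than Governance, the role strings and the log format are hypothetical, a role is assumed to grant exactly one level (Admin does not imply Write), and a helper method plays the part of the Solidity modifiers.

```python
from typing import Dict, List, Tuple


class Governance:
    """Base layer: logging only."""

    def __init__(self) -> None:
        self.log: List[Tuple[str, str, str]] = []

    def log_event(self, account: str, action: str, outcome: str) -> None:
        self.log.append((account, action, outcome))


class AccessControlled(Governance):
    """Second layer: the access check that plays the role of the modifiers."""

    def __init__(self, creator: str) -> None:
        super().__init__()
        self.roles: Dict[str, str] = {creator: "Admin"}

    def require_role(self, sender: str, role: str, action: str) -> None:
        self.log_event(sender, action, "attempt")
        if self.roles.get(sender) != role:
            raise PermissionError(f"{action} requires role {role}")
        self.log_event(sender, action, "success")


class AccessAdmin(AccessControlled):
    """Third layer: administrative function, itself guarded by the check."""

    def set_role(self, sender: str, account: str, role: str) -> None:
        self.require_role(sender, "Admin", "set_role")
        self.roles[account] = role


class CustomContract(AccessAdmin):
    """Last layer: a custom function guarded and logged like everything else."""

    def __init__(self, creator: str) -> None:
        super().__init__(creator)
        self.value = 0

    def set_value(self, sender: str, value: int) -> None:
        self.require_role(sender, "Write", "set_value")
        self.value = value
```

A failed call leaves an "attempt" entry without a matching "success" entry in the log, which is the distinction between attempted and successful access described above.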

Monday, January 9, 2017

Basic data governance on the Ethereum Blockchain

Let us assume that we have some information on the Ethereum Blockchain and we want to realize some kind of governance. As a first step, we want to collect and monitor who had access to some of the critical data, and when. The easiest way is to implement an event type for logging. It makes it possible both to log into a specific storage area and to raise the event online on the client side (for instance in a JavaScript UI).

Last but not least, critical functions, like access to the critical variable in this example, can be extended with the modifiers.

Certainly, the pattern works much better with inheritance: implement the modifiers in an ancestor smart contract and simply use them in a descendant contract. It is important to note that Ethereum is a public Blockchain. As a consequence, even if getVariable is explicitly logged in this example, there is a somewhat hacky way to read the information from the ledger itself without using getVariable.
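A Python decorator is a reasonable analogue of a logging modifier. The sketch below is an illustration under assumptions: `logged_access`, `CriticalData` and the log tuple layout are hypothetical names, and wall-clock time stands in for the block timestamp a contract would record.

```python
import functools
import time
from typing import Any, Callable, List, Tuple


def logged_access(method: Callable[..., Any]) -> Callable[..., Any]:
    """Record who called the method, and when, before running it."""

    @functools.wraps(method)
    def wrapper(self: Any, sender: str, *args: Any, **kwargs: Any) -> Any:
        self.access_log.append((sender, method.__name__, time.time()))
        return method(self, sender, *args, **kwargs)

    return wrapper


class CriticalData:
    """Holds one critical variable whose reads are logged."""

    def __init__(self, value: int) -> None:
        self._variable = value
        self.access_log: List[Tuple[str, str, float]] = []

    @logged_access
    def get_variable(self, sender: str) -> int:
        return self._variable
```

Reading `_variable` directly bypasses the log entirely, which is the Python counterpart of reading the stored value from the public ledger without calling getVariable.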

Using permissions on the Ethereum Blockchain with Smart Contract Inheritance

As we have seen in the last two blogs, access to functionality on a Blockchain can be controlled on a permission basis, and on a group and permission basis as well. One way to use this efficiently is with the help of smart contract inheritance. The modifiers and the logic are implemented in an ancestor class; as a consequence they can be used in exactly the same way in the descendant class.

Creating groups and permissions on the Ethereum Blockchain for a SmartContract

We briefly covered in the last blog how to create permissions for accessing information on the Ethereum Blockchain. Let us extend the model to administer access rights not on an individual user (address) basis, but with the help of groups and group membership. We present only a simple version as a prototype; certainly more complex solutions can be built as well.

As usual, let us create a smart contract with some state variables to access, some elementary access levels represented by an enum, and two mappings: groupAtomicRoleMapping, mapping an atomic role to a group, and groupsForMembers, mapping a list of members to a group name. A constructor is also provided that creates the first Administrators group with an Admin atomic access level and puts the current user into the group.

Secondly, let us create four modifiers. Three check whether the account calling a function is in a group with Read, Write or Admin atomic access. Additionally, one modifier checks whether the account is the owner of the smart contract.

As a next step, some functions are implemented for accessing the data of the smart contract, setting access for groups and adding users to a group. All functions are guarded by the appropriate modifiers.

Last but not least, a helper function is implemented for string comparison, and a destructor as well.

First tests of the contract can be done in the browser version of Solidity. As an example, after creating the contract with Create (default zero constructor) we get an exception for services that require Admin access. (Please note that in this example there is a typing error in the name of the AccessWithGroups constructor. This way we can test with or without executing the functionality in the constructor.)

After calling the AccesWithGroups constructor explicitly, the Administrators group is created with Admin access and the current account is put into the group. As a consequence we have access to functions like setGroupAccess.

Certainly real-life examples would require more considerations, like implementing functionality for adding or deleting groups, allowing a group to have more than one atomic access level, or just adding error checking to the existing functions.
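The group-based model can be sketched in Python to show how the two mappings work together. This is a model of the logic, not the original contract: the mapping names follow the post in snake_case, the method names are hypothetical apart from mirroring setGroupAccess, and each group is assumed to carry exactly one atomic access level, as in the prototype.

```python
from enum import Enum
from typing import Dict, List


class Access(Enum):
    READ = 1
    WRITE = 2
    ADMIN = 3


class AccessWithGroups:
    """Access rights are granted to groups; accounts get them via membership."""

    def __init__(self, creator: str) -> None:
        self.group_atomic_role: Dict[str, Access] = {"Administrators": Access.ADMIN}
        self.groups_for_members: Dict[str, List[str]] = {"Administrators": [creator]}
        self._data = ""

    def _require(self, account: str, access: Access) -> None:
        allowed = any(
            self.group_atomic_role.get(group) == access and account in members
            for group, members in self.groups_for_members.items()
        )
        if not allowed:
            raise PermissionError(f"{access.name} access required")

    def set_group_access(self, sender: str, group: str, access: Access) -> None:
        self._require(sender, Access.ADMIN)
        self.group_atomic_role[group] = access
        self.groups_for_members.setdefault(group, [])

    def add_member(self, sender: str, group: str, account: str) -> None:
        self._require(sender, Access.ADMIN)
        self.groups_for_members.setdefault(group, []).append(account)

    def get_data(self, sender: str) -> str:
        self._require(sender, Access.READ)
        return self._data

    def set_data(self, sender: str, value: str) -> None:
        self._require(sender, Access.WRITE)
        self._data = value
```

Because a group has only one level, an administrator who also wants to write has to be added to a group with Write access; this is the limitation mentioned in the closing remark above.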

Sunday, January 8, 2017

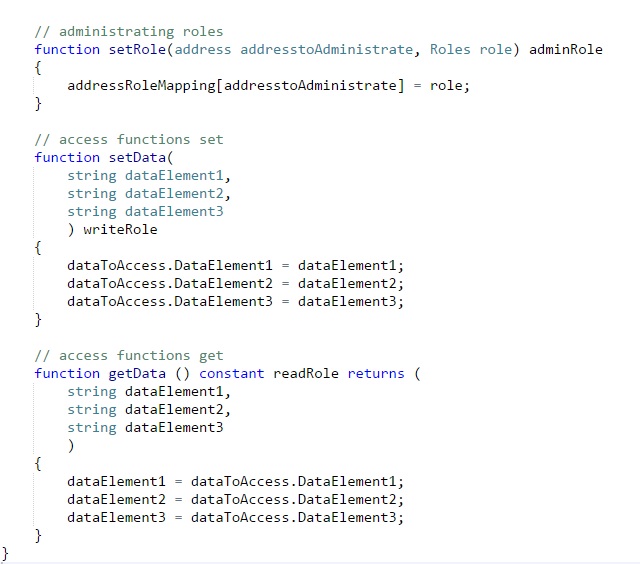

Creating permissions on the Ethereum Blockchain with SmartContract

Let us create a simple example of a permissioned smart contract on the Ethereum Blockchain. Let us assume that we have the following atomic levels of access:

- Read: reading information from the state of the Smart Contract.

- Write: writing information to the Smart Contract.

- Admin: administering the roles.

Let us assume that the smart contract has a couple of variables in a struct for storing internal information, an enum for storing atomic access information, a mapping for storing the access information of each account, and a constructor that guarantees that the creating account is registered as Admin.

As the next step, let us create three modifiers to guarantee that certain functions can be called only with the necessary rights.

Last but not least, we use the modifiers in the service functions to make sure that only people with the necessary right can reach the given service. In our example we have three services: reading data, writing data and administering access.

Certainly, the getData functionality does not really deliver the expected protection. As Ethereum is a public Blockchain, the data can practically be read from the Blockchain even if the getData function cannot be called explicitly.
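The per-account variant of the permission check is short enough to model directly in Python. The sketch is illustrative only: the class and method names are hypothetical (only getData is named in the post), a single string stands in for the struct of internal variables, and each account is assumed to hold exactly one atomic level.

```python
from enum import Enum
from typing import Dict


class Access(Enum):
    READ = 1
    WRITE = 2
    ADMIN = 3


class PermissionedContract:
    """Each account is mapped to one atomic access level."""

    def __init__(self, creator: str) -> None:
        # The creating account is registered as Admin.
        self.access: Dict[str, Access] = {creator: Access.ADMIN}
        self._data = ""

    def _require(self, sender: str, level: Access) -> None:
        if self.access.get(sender) != level:
            raise PermissionError(f"{level.name} access required")

    def get_data(self, sender: str) -> str:
        self._require(sender, Access.READ)
        return self._data

    def set_data(self, sender: str, value: str) -> None:
        self._require(sender, Access.WRITE)
        self._data = value

    def set_access(self, sender: str, account: str, level: Access) -> None:
        self._require(sender, Access.ADMIN)
        self.access[account] = level
```

As with the contract, the check protects only the function call: anything that can inspect the object (or, on chain, the ledger) can still read the stored value.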

Friday, January 6, 2017

Setting up Ethereum development environment - tools

When you are new to a programming language and environment, it is sometimes difficult to set up an initial development environment. We try to summarize the most important tools in the following:

- lightweight development environment for coding in solidity.

- good tool for developing and manually testing small contracts or simply to practice the language.

- no-live Blockchain integration

- I would say this is the infrastructure part of Ethereum: a fancy user interface for managing accounts and smart contracts, deploying to the test or live Blockchain, or simply initiating transactions between actors.

- This is another tool on the infrastructure side. Geth is a command line tool for similar purposes as the Mist browser, like creating accounts, deploying contracts to the Blockchain or transferring ether between different accounts. It provides extended functionality, however, like setting up a private Blockchain or accessing some of the detailed cryptographic elements of an account.

- It is an online monitoring tool for the live Ethereum Blockchain, providing the possibility to see accounts or transactions.

Ethereum Studio (http://blog.ether.camp/post/142794388568/ethereum-studio-is-ready-for-you, or https://live.ether.camp/):

- Ethereum Studio is a complex online integrated development environment to develop and test Blockchain applications, including both solidity and other javascript elements.

Additional practical tools (http://ether.fund/tools/):

- There are some additional tools that are nice to use, like Ethereum unit converter.

Wednesday, January 4, 2017

Blockchain as a storage

From a technical point of view, a Blockchain can be regarded as a special kind of storage system. It is much slower than, for instance, a replicated database system; however, due to the P2P architecture the availability can actually be higher than with a replicated database. Certainly the 'hacking resistance' of a Blockchain solution is much higher than that of other solutions, and of course a Blockchain is far less efficient (Figure 1).

Figure 1. Blockchain as a storage.

Sunday, December 18, 2016

Towards unmanned software development and delivery

In the good old days custom software projects were simple. There was some kind of initial interaction with the customer, called specification or analysis, then developing and architecting a solution, and at the end some kind of delivery. Regardless of whether the project ran in classical waterfall style or in a fancier agile style, software projects had the following roles:

- Business Analyst / Industry Business Consultant: to help the customer create, update and finalize the specification.

- Software Architect / Developer: to implement the software itself.

- Infrastructure expert: to deal with special infrastructure-oriented questions.

- Tester: to guarantee software quality.

- Project Manager / Scrum Master: to coordinate the whole process.

- Sales: selling the software.

In the meantime, complex development frameworks have appeared as well, making custom software development simpler from the end customer's point of view, usually reducing the development effort and making possible a much faster, more agile and bug-free software delivery. Typical examples of these frameworks:

- Rapid application development frameworks: these are development-oriented frameworks that make coding much faster, requiring fewer coding resources. An example is the Enterprise Library for the .NET Framework.

- Complex Business Solutions: there are many initiatives to provide a framework with many use cases out of the box, from the most common user and identity services to complex business applications or apps, again reducing the need for resources in the classical technical coding and testing fields. An example is Odoo Business Apps.

- Self-Service Solutions: last but not least, there are many solutions that work in a self-service manner, letting end customers without prior development or even IT knowledge build software applications themselves. Examples are elements of the Azure cloud, like PowerApps or Power BI.

Certainly there is a catch in using these frameworks. Even if they are theoretically self-service or provide a lot of functionality out of the box, in real life they reach a highly increased technical complexity. Configuring these frameworks usually requires a lot of know-how; as a consequence, delivering solutions with such frameworks requires considerably more IT consulting (Figure 1).

Figure 1. Roles and resources in different IT projects.

So the question is in which direction custom software development and delivery will develop further. I think there are four major factors that should be considered:

- Sharing economy: As software is not necessarily developed from scratch, but rather half-ready parts are configured together, sharing parts of the software will play an ever-increasing role. Something similar is already happening on the cloud backend side: instead of individual software, everyone tries to concentrate on creating and sharing (or selling) micro-services. On the other hand, certain software configuration frameworks like Azure PowerApps already contain a built-in feature to share part of or the whole application. It is important to note that sharing makes available not only an application, but the collected domain and industry know-how as well. For example, if I spent 20 years in the logistics field and I create and publish my custom logistics application, then part of my collected domain know-how is indirectly shared as well.

- Electronic Markets: Electronic markets are similar to the sharing economy, making it possible for collected knowledge to be used by someone else. Direct applications of the market can be selling and reselling building blocks of software or whole ready-to-go solutions.

- Machine Intelligence: Although machine intelligence is pretty much over-hyped at the moment, it can be used for analyzing or refining the specification of software. Creating the first requirement analysis with machine intelligence is certainly difficult, although there are some attempts at that, as in process mining. Once a version of the software is set up, however, it is simple to monitor its usage, analyze the data with machine learning and automatically propose a new, better version of the software. An example of such a framework is The Grid, which offers fully automated web pages based on machine learning of web traffic.

- Robo-advisors: Robo-advisors can be used to decrease the complexity of a configuration framework from the end customer's perspective and to eliminate the need for IT consultancy. Although such advisors are more typical in the fintech field at the moment, it is not unrealistic to imagine that similar technology can be implemented in the software development branch.

So, as a conclusion, let us imagine a classical software development example in the not too distant future: an end customer needs some kind of custom software to support his business processes. Let us investigate which human resources and roles we need for that:

- Do we need Business Analysts, Software Architects or Business Consultants to create and deliver a custom software solution? Well, not necessarily. Firstly, ready-to-go software solutions can be bought via online markets or simply used via sharing platforms. If the end customer does not find a ready solution, custom software solution configurations can be used in a self-service manner, with the help of robo-advisors and machine learning, to create the necessary solution.

- Do we need Developers, Software Architects or Testers to create and deliver a custom software solution? Well, not necessarily. If we work with configurable software solution frameworks, most software is produced in a self-service manner. Certainly such frameworks have to be implemented first in the classical way; however, once they exist, concrete software solutions can be developed without developers, software architects or testers.

- Do we need Infrastructure experts to create and deliver a custom software solution? Well, not necessarily. If everything is hosted in the cloud, then minimal infrastructure expertise is required.

- Do we need sales people to sell a custom software solution? Well, not necessarily. If everything is sold via online markets and application stores, then further sales support can be avoided.

- Do we need a Project Manager to create and deliver a custom software solution? Well, not necessarily. If we consider the previous points, the only human in the software delivery process is the customer, so project management is not required.
